Inter-Rater Reliability

Inter-Rater Reliability (IRR) measures how consistently two or more auditors record the same hand-hygiene event. You schedule a co-observation window — a date range, a facility, and an optional unit — and assign two or more auditors to it. Each auditor runs their own session inside that window, and clearPath compares the resulting sessions opportunity-by-opportunity to compute a rate-agreement score.
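As a rough illustration of the comparison step, the Python sketch below makes three assumptions. Each rater's session is a list of recorded opportunities in observation order. Sessions are aligned by position. The rate-agreement score is the share of aligned opportunities where two raters recorded the same outcome, averaged over every rater pair. The `Opportunity` type, its fields, `pair_agreement`, and `rate_agreement` are hypothetical names. This page does not describe clearPath's actual matching and scoring rules.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Opportunity:
    # Hypothetical record: which WHO moment (1-5) and whether hand hygiene was performed.
    moment: int
    performed: bool


def pair_agreement(a: list[Opportunity], b: list[Opportunity]) -> float:
    """Share of position-aligned opportunities where both raters recorded the same outcome."""
    n = min(len(a), len(b))  # extra, unmatched opportunities are ignored in this sketch
    if n == 0:
        return 0.0
    matches = sum(1 for x, y in zip(a, b) if x == y)
    return matches / n


def rate_agreement(sessions: list[list[Opportunity]]) -> float:
    """Mean pairwise agreement across every pair of raters (needs two or more sessions)."""
    if len(sessions) < 2:
        raise ValueError("IRR needs at least two rater sessions")
    scores = [pair_agreement(a, b) for a, b in combinations(sessions, 2)]
    return sum(scores) / len(scores)
```

For example, two raters who agree on two of three aligned opportunities score about 0.67 under this sketch.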

Strong agreement gives you confidence in your audit data. Weak agreement points to a coaching opportunity for a specific auditor — and a per-moment breakdown shows you exactly which moment to focus on.

Key Benefits

- Schedule co-observation windows

- Two or more raters per window

- Opportunity-by-opportunity comparison

- Headline rate-agreement score

- High / moderate / low interpretation

- Per-moment agreement breakdown

- Opportunity-delta sanity check

- Recompute after edits or new sessions
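The interpretation bands and the opportunity-delta check can be pictured as two small functions. The cut-offs below are illustrative assumptions, not clearPath's published thresholds: 0.80 for high, 0.60 for moderate, and a 10% opportunity-count tolerance. `interpret` and `opportunity_delta_ok` are hypothetical names.

```python
# Hypothetical cut-offs; the real product thresholds may differ.
HIGH_CUTOFF = 0.80
MODERATE_CUTOFF = 0.60


def interpret(score: float) -> str:
    """Map a 0-1 agreement score to a high / moderate / low label."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= HIGH_CUTOFF:
        return "high"
    if score >= MODERATE_CUTOFF:
        return "moderate"
    return "low"


def opportunity_delta_ok(counts: list[int], tolerance: float = 0.10) -> bool:
    """Sanity check: raters watching the same window should log similar opportunity counts.

    Passes when the spread between the largest and smallest count is within
    `tolerance` of the largest count (hypothetical rule).
    """
    if not counts:
        return False
    largest, smallest = max(counts), min(counts)
    if largest == 0:
        return True  # nobody recorded anything, so there is no spread to flag
    return (largest - smallest) / largest <= tolerance
```

A low score paired with a failed delta check often suggests the raters were not counting the same opportunities. That is worth resolving before treating the gap as a coaching issue.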

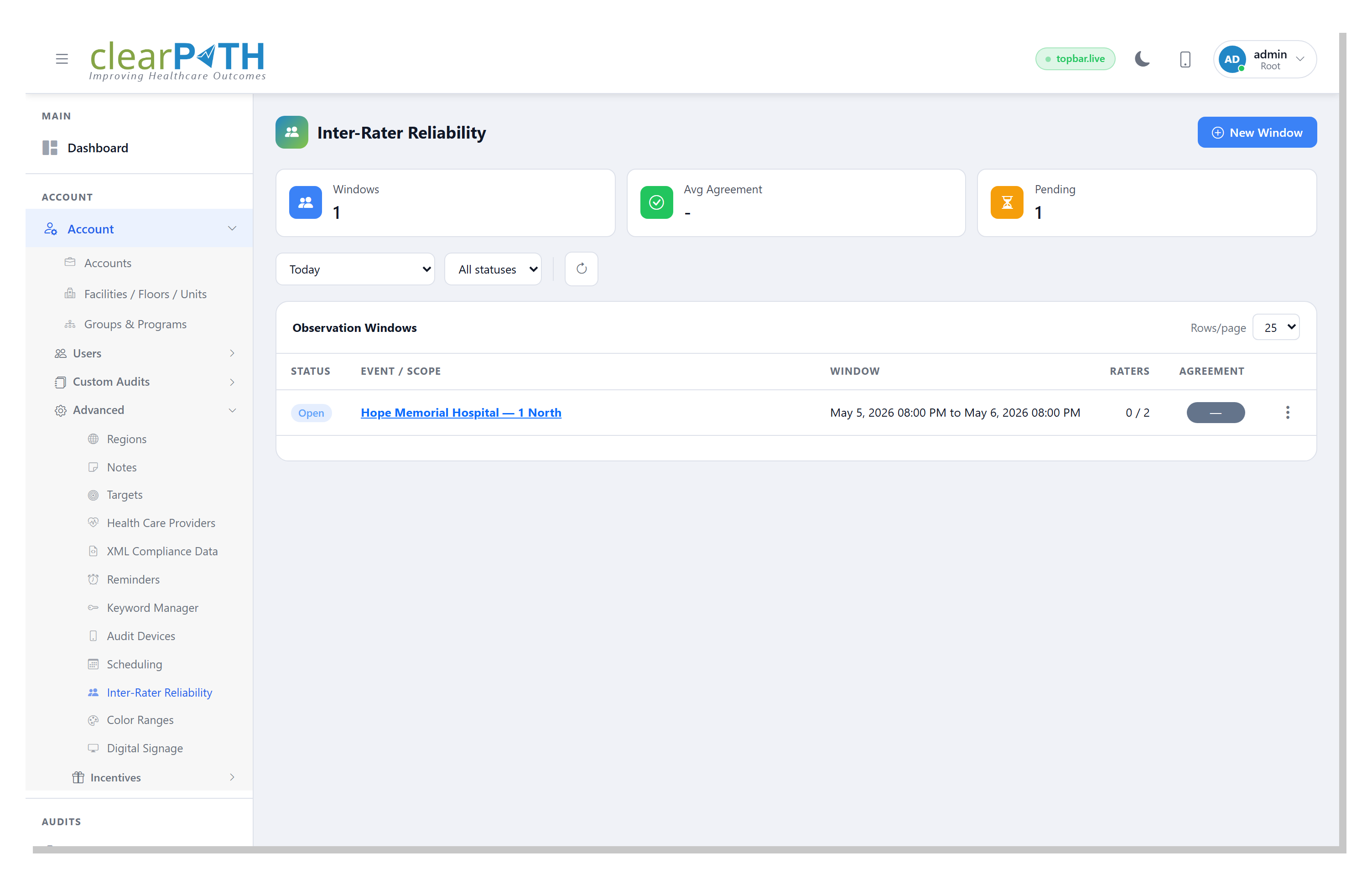

Observation Windows

The IRR list is the workspace for every co-observation window you have scheduled. Stat cards across the top show how many windows are open, the average agreement across the windows you have already computed, and how many windows are still waiting on raters or on a compute run. Filter by time period or by lifecycle status: Open, In progress, Computed, or Closed.

- Stat chips: Windows, Avg Agreement, Pending

- Filter by time period and status

- Status pill on every row

- Assigned vs. expected rater count at a glance

- Agreement percentage pill once computed

- Recompute or delete from the row menu
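The three stat chips are simple aggregates over the window list. The sketch below assumes each window carries a status, an assigned and an expected rater count, and an agreement value that stays empty until compute runs. It also assumes "open" means any window not yet Closed. A window counts as pending when it is short of raters or has not been computed. The `Window` fields and `stat_chips` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Window:
    # Hypothetical shape of one row in the IRR list.
    status: str  # "Open", "In progress", "Computed", or "Closed"
    assigned_raters: int
    expected_raters: int
    agreement: Optional[float] = None  # set once compute has run


def stat_chips(windows: list[Window]) -> dict:
    """Return the Windows, Avg Agreement, and Pending values for the list header."""
    active = [w for w in windows if w.status != "Closed"]
    computed = [w.agreement for w in windows if w.agreement is not None]
    pending = [
        w for w in active
        if w.assigned_raters < w.expected_raters or w.agreement is None
    ]
    return {
        "windows": len(active),
        "avg_agreement": sum(computed) / len(computed) if computed else None,
        "pending": len(pending),
    }
```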

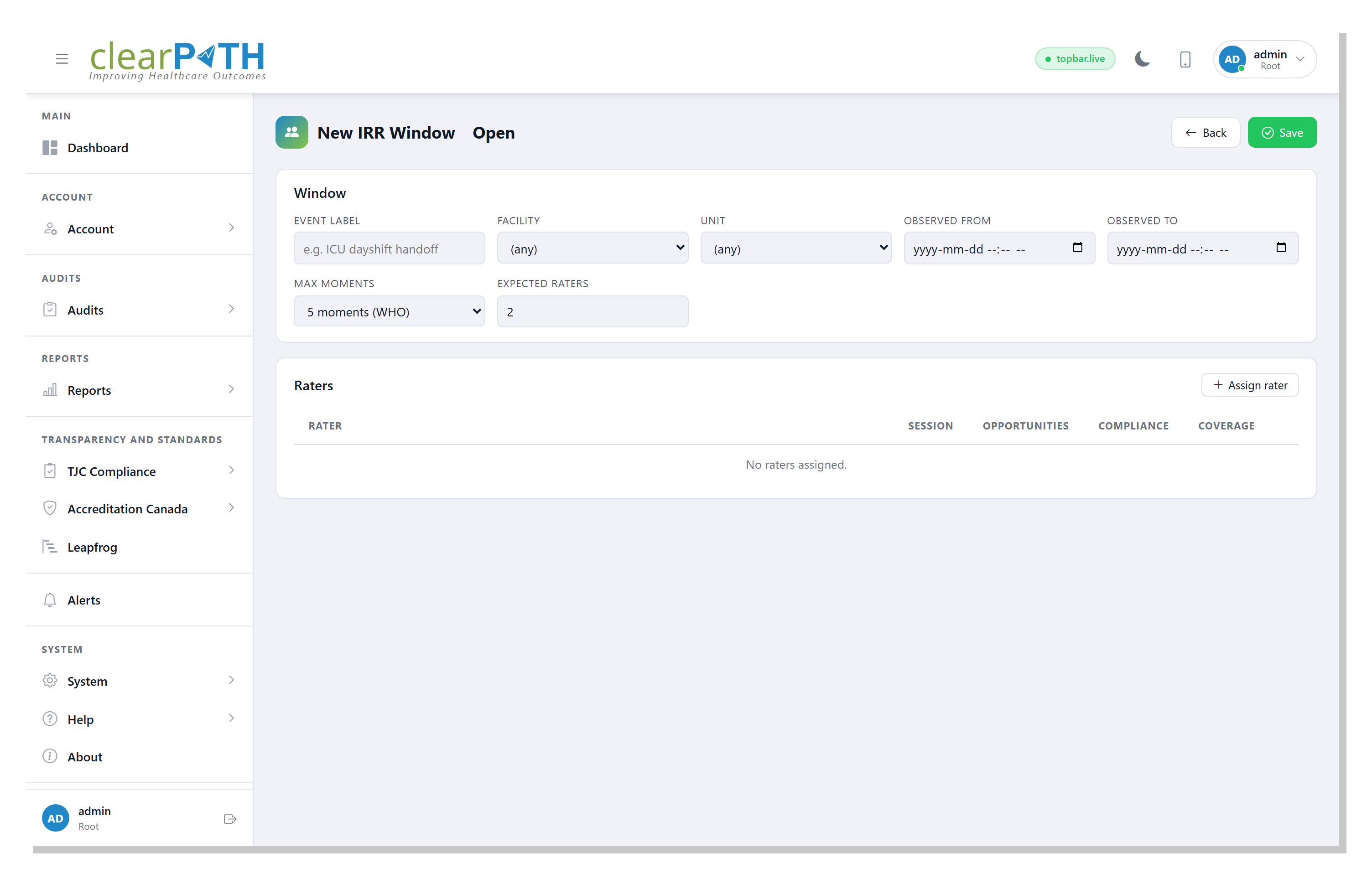

Window Editor

The window editor is split into three cards: the window definition, a headline rate-agreement metric (shown after compute), and the rater roster. The compute step compares every rater's session opportunity-by-opportunity, then breaks the result down by WHO moment so you can see which moment the raters disagreed on most.

- Event label

- Facility & unit scope

- Observation date range

- Max moments (2, 4, or WHO 5)

- Expected rater count

- Per-rater session linkage

- Per-rater coverage of the window

- Per-moment comparison table
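The per-moment table can be sketched by grouping aligned opportunities by WHO moment and scoring each group separately, which makes the weakest moment easy to spot. This Python sketch assumes two raters whose opportunities are aligned by position and recorded as `(moment, performed)` tuples. `per_moment_agreement` and `weakest_moment` are hypothetical names, not clearPath functions.

```python
from collections import defaultdict

Opp = tuple[int, bool]  # (WHO moment 1-5, hand hygiene performed?)


def per_moment_agreement(a: list[Opp], b: list[Opp]) -> dict[int, float]:
    """Agreement rate per WHO moment for two position-aligned rater sessions."""
    totals: dict[int, int] = defaultdict(int)
    matches: dict[int, int] = defaultdict(int)
    for x, y in zip(a, b):
        # Group by the first rater's moment; a moment mismatch counts as disagreement.
        moment = x[0]
        totals[moment] += 1
        if x == y:
            matches[moment] += 1
    return {m: matches[m] / totals[m] for m in sorted(totals)}


def weakest_moment(table: dict[int, float]) -> int:
    """Moment with the lowest agreement, i.e. the one to coach on first."""
    return min(table, key=table.get)
```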

Audit Data You Can Defend at Survey

Inter-Rater Reliability is included on Enterprise and Ultimate editions. Calibrate your auditors, surface drift before it shows up in a board pack, and walk into every survey with audit data you can defend.